In this section 5, let us briefly look at in-memory marketing hype vs reality, to see if the claims really stack up and what alternatives exist for clients who are worried about the disruption, maturity, risks and commercial lock-in of the new SAP S/4 HANA, SoH and/or SAP BW HANA platform strategy.

This section could also be called an exercise in “Hype busting”, as we likely need to clearly separate the excellent and pervasive marketing from the technical and solutions deliverable reality.

For the more technically minded reading this item, we shall now drop into some relatively technical discussions related to relational databases and systems design. I make no apologies for doing this, as it’s likely important to help reset or gently correct a number of the relative benefits and themes that are normally associated with SAP HANA and/or S/4 HANA “Digital Core” presentations, including at recent Sapphire and/or SAP TechEd conferences.

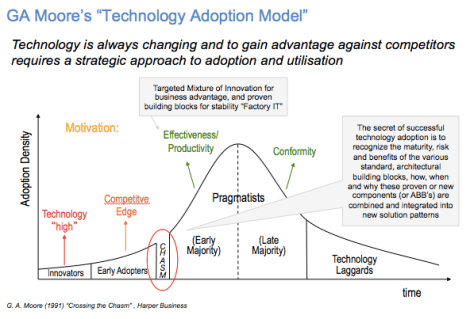

Where are we now, in my view, with respect to SAP S/4 HANA adoption rates vs a Gartner-type hype curve:

In this case I’ll use IBM’s DB2 SAP optimized data platform as a point of reference. It’s not that Oracle 12c SAP “AnyDB” platform choices don’t share a number of similar capabilities (I’d naturally say we do it better, more efficiently etc); it’s just that it would be rather technically presumptuous of me to try to represent Oracle’s 12c in-memory cache capabilities without sitting down with them to understand, in greater detail, the capability of Oracle 12c and their ongoing development roadmap vs SAP HANA for SAP NetWeaver and/or SAP BW 7.x workloads.

It also depends on whether SAP SE, commercially, actually wants to best leverage and/or enable these AnyDB capabilities (or not), hence I won’t attempt to do this in this item.

“In-Memory” Columnar Myth / Hype Busting – Number 1

Firstly, I know it sounds obvious, but all databases run in computer memory. We are really simply discussing whether the database is organized in a columnar relational form (ideal for analytical / OLAP “multi SQL select” read orientated SQL workloads) or in a row relational form, which is typically used for demanding transactional (OLTP) workloads with higher volumes of “single SQL select, insert, update and/or delete” statements and/or often row based batch updates. Let’s call these more traditional read / write OLTP workloads.

Here 70/30, 80/20 or 90/10 read / write ratios are common, with higher write ratios typically observed for demanding OLTP, batch, planning (SCM) and/or MRP manufacturing workloads.
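To make the row vs column distinction a little more concrete, here is a minimal Python sketch. It is purely illustrative and is not how DB2 BLU or SAP HANA are implemented internally; the table, field names and values are invented for the example.

```python
# Hypothetical three-row sales table; names and values are illustrative only.
rows = [
    {"order_id": 1, "region": "EMEA", "amount": 120.0},
    {"order_id": 2, "region": "APAC", "amount": 75.5},
    {"order_id": 3, "region": "EMEA", "amount": 210.0},
]


def to_columns(row_store):
    """Pivot a list of row dicts into one list per column."""
    columns = {}
    for row in row_store:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


columns = to_columns(rows)

# OLTP-style access: fetch (or update) one complete record.
# The row layout keeps all fields of order 2 together.
order_2 = next(r for r in rows if r["order_id"] == 2)

# OLAP-style access: aggregate one attribute over every record.
# The column layout only has to scan the "amount" list.
total_amount = sum(columns["amount"])

print(order_2)
print(total_amount)
```

The same data serves both access patterns; what changes is how much unrelated data each pattern has to touch, which is why the read / write mix of the workload matters so much.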

Indeed the IBM DB2 10.5 BLU “In-memory” columnar capabilities are named after an IBM Research project called “Blink Ultra”, run at IBM’s US West Coast based Almaden Labs in 2007/8, which effectively observed that converting prior relational rows to columns in memory sped up the more demanding OLAP / SQL analytical queries by up to 80 times.

A detailed research paper from Guy Lohman and his team at IBM Almaden from 2007/8 can be found here, if required.

It’s also true that with DB2 LUW (and/or DB2 on z/OS) IBM has spent many years optimizing the use of relatively moderate amounts of DB2 database cache (called DB2 Buffer Pools) and systems memory to provide optimal throughput with justifiable levels of systems platform memory investment, whilst persisting data to disk / SAN storage and also sustaining ACID database transactional consistency.

Hence the idea that any one vendor has a unique technology in this area is largely marketing hype from my point of view. For sure a particular vendor has marketed this capability very effectively, whilst IBM has been less effective with the marketing and likely more effective with an evolutionary, non-disruptive deliverable.

For examples of this DB2 + SAP BW deliverable, refer to a couple of summary YouTube videos from Yazaki (a large privately owned Japanese manufacturer of custom auto / car wiring looms) and/or Knorr Bremse, a large manufacturer of advanced braking systems for trains etc.

Yazaki and Knorr Bremse – SAP BW plus DB2 10.5 BLU videos

In-Memory “Commodity Computing, Multi Core is cheap” Myth / Hype Busting – Number 2

DB2 10.5 LUW (Linux, Unix, Windows) has been optimized to take advantage of the more recent multi-core processor architectures, including both Intel Xeon and POWER (AIX, Linux, IBM i) based architectures, whilst offering a choice of operating system support with ongoing SAP ERP / SAP NetWeaver 7.40 and 7.50 certification, optimization and support through to 2025.

If, for example, we consider the proven and mature “Simultaneous Multi-Threading” (SMT) capabilities of the IBM PowerVM Hypervisor with either AIX / Unix and/or Linux, these capabilities have been extended over time to provide options to switch between one, two, four or eight threads per core, to best match the application workload instruction flows that are then assigned and executed on multiple CPU cores (up to 12 per socket).

This helps to both increase application throughput and increase IT asset utilization levels.

Indeed, in recent IBM Boeblingen Lab tests with DB2 and BLU we tested the relative benefits of SMT 1, 2, 4 and/or 8 for a SAP BW 7.3 and/or 7.4 analytical workload. It was clear during these tests that, for this particular workload, SMT 4 provided an optimal balance of throughput and server / IT asset utilization (CPU capacity, cycle & thread utilization), whilst avoiding the excessive “time slice” based hypervisor thread switching that can significantly hamper the throughput of alternative, less efficient hypervisors serving the Intel / Linux or “WINTEL” market.

With IBM’s POWER, for DB2 10.5, v11.1 and/or HANA on POWER, we typically observe 1.6 to 1.8 times greater throughput per POWER8 core (vs alternative Intel processors), which is supported in balanced systems design terms by roughly 4 times the memory and/or IO throughput compared to alternative processor architectures.
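As a rough back-of-envelope illustration of what the SMT modes and the per core throughput range mean for capacity planning, here is a small Python sketch. The function names and the example inputs (2 sockets, 100 Intel cores) are my own assumptions for illustration; real sizing would of course use SAPS ratings and workload tests rather than a simple ratio.

```python
import math


def hardware_threads(sockets, cores_per_socket, smt_mode):
    """Hardware threads presented to the OS for a given SMT mode."""
    if smt_mode not in (1, 2, 4, 8):
        raise ValueError("SMT mode must be 1, 2, 4 or 8")
    return sockets * cores_per_socket * smt_mode


def power8_cores_needed(intel_cores, per_core_ratio):
    """POWER8 cores for the same throughput, given a per core throughput ratio."""
    return math.ceil(intel_cores / per_core_ratio)


# Hypothetical 2 socket server with 12 cores per socket, running SMT 4.
print(hardware_threads(sockets=2, cores_per_socket=12, smt_mode=4))

# Hypothetical 100 Intel cores, at both ends of the 1.6 to 1.8 range.
print(power8_cores_needed(100, 1.6), power8_cores_needed(100, 1.8))
```

The point is simply that a per core throughput advantage translates fairly directly into fewer cores to license, power and cool for the same workload.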

For example, if you have a demanding SAP IS Utilities daily, monthly or quarterly billing batch run for tens of thousands of your Utility clients with SAP ERP 6.0 / SAP NetWeaver, the combination of DB2 10.5 and POWER8 with AIX 7.1 (and/or Linux) is really very hard to beat in batch throughput, availability, reliability and delivered IT SLA / Data Centre efficiency terms.

In parallel, considerable and ongoing DB2 development lab efforts have resulted in DB2 10.5 SAP platform solutions that also fully leverage modern “Commodity” Intel based multi-core CPU architectures; hence this is not a capability unique to the SAP HANA rdbms by any means.

During mixed SAP or other ISV application workload testing it’s true to say that some ERP / ISV applications better exploit multi-threaded CPU architectures and modern OS Hypervisors than others.

This remains as true for various SAP Business Suite / SAP NetWeaver (or indeed S/4 HANA) workloads as for other ISV workloads, where multi-threaded application re-engineering and optimization typically takes many months and/or many man-years of effort. Indeed, at one SAPPHIRE (2014), Hasso Plattner (Co-Founder and Chair of the SAP Supervisory Board) reflected on the significant and ongoing effort to re-optimize many millions of lines of ABAP code in the existing SAP NetWeaver core platform for S/4 HANA, in addition to the subsequent CDS “push down” initiatives briefly mentioned before.

Also, as previously mentioned in my prior Walldorf to West Coast blog, I’m rather reserved about the later upgrade complexity and costs I’d previously observed in a Retek / Oracle Retail scenario, which pushed retail merchandising replenishment (RMS) functionality down from the client-specific application configuration, through the Oracle application tier, into the Oracle 10g rdbms tier, leveraging PL/SQL stored procedures.

For sure this helped to speed up key replenishment batch vs prior IBM DB2 or IMS based M/Frame platforms, however with the later penalty that the overall Retek RMS or WMS solution stack became very tightly coupled and interdependent in version terms.

It also essentially limited (like SAP HANA) the Oracle Retail / Retek platform rdbms choice to one only, where later application version upgrades were really very significant “re-implementations”. Conversely, the prior segregation and separation of application and rdbms duties in a SAP IS Retail / NetWeaver landscape helped to reduce or mitigate this issue.

Hence, in this case, the structured development and enablement of SAP’s Core Data Services (CDS) interface between the application and deeper database functionality becomes vital for SAP clients.

It’s also true to say the functional depth and breadth of capabilities being built into SAP HANA is very impressive, however this does mean a high rate of change, patching and version upgrades that in turn will need to be aligned to Vora / Hadoop platform versions.

In an Intel environment, DB2 10.5 and/or 11.1 LUW naturally also leverages Intel / Linux and/or Windows “Hyper Threading” (typically dual threads per physical processor core).

In my view the myth here is that Intel multi-core architectures are, per se, inherently cheaper than alternative mature Type 1 (or Type 2) hypervisor implementations on IBM’s POWER or IBM System z (refer to this item for a summary of the difference between Type 1 and Type 2 hypervisors).

For example, within IBM we internally consolidated many thousands of prior distributed Unix / AIX / Linux systems and applications onto a limited number of large IBM System z servers running Linux with a highly efficient and mature Type 1 hypervisor. This was in fact significantly cheaper, and considerably more efficient in Green IT and DC PUE terms, than alternative distributed computing options.

I’m not saying here that Intel / VMware ESX or Linux based hypervisor solutions don’t also provide considerable IT efficiency and platform virtualization opportunities, they do, it’s just that I rarely favour “a one size fits all” binary IT platform strategy.

In my experience a single platform strategy rarely fits (at least for the largest Global Enterprises; it’s likely rather different for small and medium sized enterprises).

Implementing “a one size fits all strategy” often forces rather uncomfortable compromises for very Large Enterprise scale clients, who naturally both virtualize and tier out their server and storage platforms (increasingly also in Hybrid Cloud deployment patterns) to match the requirements of different workloads, the delivered business driven IT SLAs, and the real life delivered TCA / TCO and cost / benefits.

For sure it’s relatively easy to compare an older or partially virtualized Unix / Oracle environment with a fully virtualized x86, Intel, VMware / Linux scenario (or Intel / Linux Cloud) and demonstrate TCO / TCA savings. However, these often tend to be rather misleading “apples & pears” comparisons, vs comparing one rdbms platform under load against another on the same platform and operating system for the same set of OLAP or OLTP workloads (a much more balanced comparison).

The intense IBM focus is really on the most efficient use of the available systems resources (cores, memory & IO), in combination with increased IT agility and responsiveness, to help optimise Enterprise Data Center efficiency (some call this Green IT) whilst minimizing the required input power (often measured in megawatts) for larger DCs, as measured by the Power Usage Effectiveness (PUE) data centre efficiency ratio.

In this area, with the significant consumption of many GB and/or TB of RAM and/or many thousands of cores (for large Enterprise SAP Landscape deployments), the SAP HANA architecture can be very costly indeed in DC efficiency terms, in particular given the limitations currently associated with the virtualization of on-premise HANA production environments.

In-Memory “Data Compression Rate and TCO Savings” Myth Busting – Number 3

With DB2 10.5 “Adaptive and Actionable” compression we often observe and sustain DB2 data compression rates of 75-85% (call it a 5:1 compression ratio at the 80% mid-point).

In particular, with DB2 BLU columnar conversion of targeted SAP BW tables we leverage advanced Huffman encoding, in addition to significantly reducing the requirement for aggregates and indexes, resulting in compression rates of 85-90% or more (vs prior uncompressed base lines) depending on the specific nature of the client’s SAP BW 7.x tables.

For SAP BW with HANA, ratios of 3.5:1 to 4:1 vs uncompressed may typically be observed (depending on client data etc).

Hence, in these scenarios, clients implementing SAP HANA columnar strategies will actually likely observe a reduction in compression rates if they are already using either DB2 10.5 Adaptive and/or DB2 10.5 BLU actionable compression with SAP BW 7.x.
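Because compression is quoted sometimes as a percentage saving and sometimes as an N:1 ratio, it’s worth putting the figures above on the same scale. The following Python sketch does only that conversion; the function names are mine, and the inputs are simply the ranges quoted in this section, not new measurements.

```python
def ratio_from_saving(saving_pct):
    """Convert a space saving in percent (e.g. 80) to an N:1 compression ratio."""
    if not 0 <= saving_pct < 100:
        raise ValueError("saving must be in the range [0, 100)")
    return 100 / (100 - saving_pct)


def saving_from_ratio(ratio):
    """Convert an N:1 compression ratio to a space saving in percent."""
    if ratio < 1:
        raise ValueError("ratio must be at least 1")
    return 100 * (1 - 1 / ratio)


# DB2 ranges quoted above, expressed as ratios.
for pct in (75, 80, 85, 90):
    print(f"{pct}% saving -> {ratio_from_saving(pct):.1f}:1")

# HANA ratios quoted above, expressed as percentage savings.
for ratio in (3.5, 4.0):
    print(f"{ratio}:1 ratio -> {saving_from_ratio(ratio):.0f}% saving")
```

On the same scale, a 3.5:1 to 4:1 ratio is a saving of roughly 71-75%, which sits at or below the bottom of the 75-85% DB2 range quoted above.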

This is in addition to “doubling up” the required memory for SAP HANA working space, whilst sizing combined SSD / HDD (Solid State or Hard Disk Drive) storage at FIVE times the compressed data size, for HANA database persistence (x4) and HANA logs (x1).

In these scenarios the client will actually observe a significant net increase in SSD / HDD or SAP HANA TDI SAN based storage capacity, not a reduction as often claimed in SAP HANA marketing presentations and brochures. This is particularly true when these differences are multiplied up over the multiple SAP environments (Dev, QAS, Production, Dual Site DR, Pre-Production, Training etc) of a real life SAP Landscape, operating in either a dual or single track landscape on the path to production (from Sandpit / Development, through QAS, Pre-Production and Regression, to Production).
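The sizing rules of thumb above (memory at twice the compressed data, disk at five times the compressed data) can be worked through with a short Python sketch. The 10 TB uncompressed starting point, the 4:1 and 5:1 ratios and the count of six environments are illustrative assumptions on my part, and in practice not every environment is a full size copy of production.

```python
def hana_footprint_tb(uncompressed_tb, compression_ratio):
    """Apply the rules of thumb quoted above to one HANA environment."""
    compressed = uncompressed_tb / compression_ratio
    return {
        "compressed_tb": compressed,
        "ram_tb": 2 * compressed,          # data plus an equal working space
        "persistence_tb": 4 * compressed,  # HANA database persistence (x4)
        "log_tb": 1 * compressed,          # HANA logs (x1)
        "disk_tb": 5 * compressed,         # persistence + logs
    }


# Illustrative inputs only: 10 TB uncompressed, 4:1 HANA compression,
# and an existing source database already compressed at 5:1.
uncompressed_tb = 10.0
hana = hana_footprint_tb(uncompressed_tb, compression_ratio=4.0)
existing_compressed_tb = uncompressed_tb / 5.0
environments = 6  # assumed: Dev, QAS, Pre-Production, Production, DR, Training

print(hana)
print("existing compressed data per environment (TB):", existing_compressed_tb)
print("HANA disk across the landscape (TB):", hana["disk_tb"] * environments)
```

With these assumed inputs the HANA disk sizing per environment (12.5 TB) is larger than the original uncompressed data, before any multiplication across the landscape.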

Naturally, for both SAP HANA and DB2 10.5 BLU with SAP BW, clients can complete older data housekeeping and/or BW NLS archiving. In the case of DB2 BLU this uses a common BLU NLS archiving capability; for SAP HANA + BW it’s currently Sybase IQ based BW NLS archiving, potentially Hadoop / Vora in the future.

This diagram is often presented at SAP Sapphire and/or TechEd conferences to summarize the target, potential SAP HANA storage savings with Suite on HANA for SAP’s own ERP deployment.

However, what is rarely mentioned is that ~ 3.x TB of older table data from a prior Oracle to DB2 (over HP-UX, Superdome) SAP ERP migration was removed, or “cleaned up” in housekeeping terms, in advance of the ERP on HANA migration. This gives a very different picture in terms of the realized data compression rates vs a prior, partially compressed, older DB2 environment; however, mentioning it would really spoil a good story and a good SAP HANA marketing chart!

Naturally the required additional new hardware capacity investment that is required is then of significant commercial interest to the multitude of Intel / SAP HANA SAN platform providers (either HANA Appliance and/or consolidated TDI SAN based).

For example I created this simple chart to reflect the relative SAP DB2 BLU and SAP HANA memory sizing ranges, understanding for both technologies it’s also individual client table data dependent.

In my experience this seems to have turned into a bit of a server and storage hardware vendor feeding frenzy with multiple h/w vendors rushing to endorse the whole SAP S/4 HANA adoption story for obvious reasons whilst largely ignoring prior existing, proven, incremental SAP platform solutions!

In-Memory “Future Optimization, SAP Roadmap” Myth Busting – Number 4

In many SAP Enterprise Client engagements, I receive the following comments “but we have been advised that we will miss out on future SAP application optimizations if we don’t migrate to a SAP HANA rdbms and/or S/4 HANA “Digital Core” sooner rather than later”.

These comments are often made irrespective of the real life S/4 HANA adoption rates, which are a very small fraction (roughly 1%) of the SAP Business Suite / NetWeaver installed base; such is the largely sales incentive driven pressure on SAP sales and technical sales teams.

At best this is only partially true, where SAP continue to enable and develop a “Core Data Services” (CDS) rdbms abstraction layer that creates a logical structure for the push down and optimisation of SAP HANA “re-optimized” application code to the rdbms database tier.

Consequently and logically IBM with DB2 (and indeed Oracle with 12c) continue to develop, optimize and align DB2 capabilities to SAP NetWeaver CDS functionality, which incidentally is supported and certified with SAP NetWeaver 7.40 and 7.50 with DB2 10.5 (& above) through to 2025.

Additionally, for IBM Financial Services clients, CDS has typically been deployed in conjunction with Fiori transactional applications to significantly improve SAP usability, whilst protecting the client’s investment in IBM’s System z and/or DB2 over z/OS with Linux or AIX SAP application server capacity.

In practical terms this means that ongoing SAP HANA based SAP ABAP code re-engineering and optimization efforts (there are many many millions of lines of single stack ABAP and/or prior dual stack ABAP / JAVA code) are aligned via CDS to optimized rdbms alternatives like DB2 and/or Oracle 12c in the near and mid term IT investment and planning horizon.

At Sapphire NOW 2016, I picked up a number of initial comments that the “Suite on HANA” SAP HANA Compatibility Views would only be developed and sustained for a finite period (until 2020), allowing clients more limited time to migrate to the new simplified SAP S/4 HANA Enterprise Management code streams and table structures (the new Universal Ledger in Simple Finance as an example).

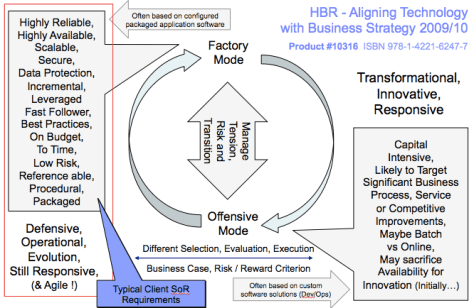

From a personal point of view, taking an existing, deeply customized regional or global SAP NetWeaver / ECC application template that has been “Read / Write” optimized for existing rdbms platforms over many years, and deploying it over HANA (SoH), is likely an application and rdbms platform mismatch.

It’s likely more logical to implement a new simplified S/4 HANA Digital Core “read optimised” application template over a HANA columnar rdbms platform. This assumes the required application functionality is available and that the business is willing to remove or remediate prior customizations to align to a forward SAP S/4 HANA digital core roll out and transition strategy.

However it is also becoming clear now that in addition to the prior SAP Business Suite / NetWeaver code line (and various PAM defined OS/DB supported combinations) the SAP HANA initiatives have created at least 4 if not more different SAP S/4 HANA “simplified” code lines or releases including:

- Simplified S/4 HANA solutions hosted on the HANA Enterprise Cloud

- The prior S/4 HANA Simple Finance (sFin v1) code, maintenance and release line

- S/4 HANA Enterprise Management and Simple Finance v2 “On Premise” code & release line

- The S/4 HANA Enterprise and Simple Finance “On Premise” code line but HEC hosted

The clear risk for both SAP SE and SAP Enterprise clients is that there is simply a switch from developing, managing, testing and releasing multiple “AnyDB” OS/DB choices over a SAP Business Suite / SAP NetWeaver code stream, to managing, aligning and releasing multiple S/4 HANA editions and code lines (on or off premise). This is just a different set of complexities to manage, but with a new restriction on prior client “AnyDB” choices; this is not, in my view, SAP HANA “simplification”.

In-Memory “Commodity / Cloud Based TCO Reduction” Hype Busting – Number 5

In our industry we are observing the convergence of multiple significant structural changes, where previously we would typically deal, relatively speaking, with a single significant structural change every 3-5 years (Desktop Computing, Client / Server, Distributed, the emergence of Eclipse, JAVA, Linux Open Source etc).

Today we have to manage and prioritize limited IT investment resources over multiple concurrent significant structural changes (Mobile Devices, IoT, Public / Hybrid Cloud, Big Data, significant Cyber Security threats). Some of us older folks with many years in IT (and a few grey hairs) might suggest some of these themes are being a little “over hyped” in IT industry fashion terms. Hence we tend to take a cautious view, asking the harder “but, so what?” questions, to help sort out material delivered benefits, ROI and progress from the considerable IT industry hype (it is a bit of a fashion industry also!).

In my view it’s perfectly possible to architect, build and deploy an “at scale”, fully virtualized SAP Private Cloud that is every bit as efficient (if not more so in Data Center Efficiency / PUE terms) as a Hybrid Public / Private cloud based on AWS (Amazon Web Services) and/or MS Azure platforms running on Intel commodity “ODM” (Original Design Manufacturer) 2 or 4 socket servers.

Indeed, the author was directly involved in, and responsible for, the successful deployment of a fully virtualised IBM DB2 SAP Private Cloud in support of ~ 8 Million SAPS and 600+ strategic SAP environments, with ~ 12 Petabytes of fully virtualised and tiered SAP storage capacity spread over dual global Data Centres, with WAN acceleration to support prior SAP GUI, SAP Portal and/or Citrix enabled SAP clients, leveraging DB2, PowerVM and AIX. In practical terms it remains a highly efficient, flexible and scalable SAP platform in support of a 50+ Bn Euro (~ $75 Bn annual t/o) Consumer Products business.

In this case, as mentioned briefly in a prior blog section, we completed detailed modelling of a SAP HANA appliance based deployment over 4 regions and 4 at scale workloads / SAP Landscapes (ECC, APO/SCM, BW, SAP CRM), with dedicated production appliances, VMware ESX / Intel virtualized capacity for smaller non-production SAP HANA instances, and a shared, common TDI based storage strategy. This carried a DC TCA (Total Cost of Acquisition) premium of between 1.5 and 1.6 times over the existing virtualised, tiered IBM DB2 SAP and IBM POWER deployment strategy.

On one SAP HANA video a x10 landscape capacity reduction was indicated, however this really did not correlate in any way with the actual worked example mentioned above.

For sure, I would not debate the agility, flexibility and initial responsiveness (assuming the required VPN links, security and data encryption needs are met) of AWS, MS Azure and/or indeed IBM’s own SoftLayer Cloud offerings for rapid provisioning of Dev/Ops enabled Front Office, Big Data and/or next generation Mobile Enabled application workloads, including S/4 HANA or indeed SAP NetWeaver with DB2 10.5 and/or CDS, which is also available on MS Azure, AWS and/or IBM’s SoftLayer / CMS4SAP platforms.

The crucial factor here is a proper base line and measurement of the “before & after” environments and to avoid the considerable temptation to compare different “apples & pears” generations of SAP platforms that rather “mixes up” the whole TCO analysis and results equation.

I consistently observe Cloud TCO comparisons of prior “Legacy”, partially virtualized, older generations of Unix / rdbms systems with fully virtualised Intel x86 Cloud environments. These types of old vs new comparisons can be rather misleading and should, in my view, be taken with a large and rather cynical pinch of salt.

Any TCA / TCO comparisons should really use “like generation” CPU / virtualization platforms and virtualised, tiered storage, combined with current generation rdbms platform choices. For example, comparing an older version of Oracle (or indeed DB2) over a prior Unix platform generation with a fully virtual x86 Cloud running an initial development SAP HANA + SAP BW scenario (including any risks of noisy neighbours, unless dedicated capacity is deployed) can be very misleading, whilst creating impressive headlines during Cloud vendor marketing events and presentations.

In-Memory “IT Agility, Sizing, Solution Responsiveness” Hype Busting – Number 6

After many years of SAP and/or ERP platform sizing experience, we all understand that sizing complex SAP Systems Landscapes is a combination of science (user input on expected user and transaction volumes, data volumes, expected user & data growth rates, expected roll out rate and planning horizons, workload scalability testing, client specific PoCs etc) and detailed prior experience and judgement on the likely system sizing variation and future growth rates after SAP application configuration and customization. It also has to cater for the typical, often changing, business requirements and/or fluid ERP / SAP roll out schedules by country or region, over different SAP ERP and/or related non-SAP systems alignment and integration requirements.

In this context it really nets out to one of two sizing strategies in particular if SAP HANA appliance vs TDI strategies are being considered.

- The “Appliance based” model: define the target environment and future growth horizon, then add a safety margin for errors and unexpected changes in inbound demand (an increasingly frequent issue). Then you deploy the targeted 2, 4, 8 or more socket / server appliance building blocks with the appropriate data compression rates and GB / TB of RAM sizing methods.

- An On Demand model (in IBM we call it Capacity Upgrade on Demand, “CUoD”): you size a scalable platform with active live and/or “Dark” CUoD capacity that is then activated “on demand” when the actual workload requirement is known vs the initial SAP ERP sizing estimates.

- Then, on top of these 2 models or approaches, you consider the realistic IT / ERP platform technology / capacity refresh cycle vs expected roll out schedules, workload and data growth rates, to ensure you don’t break the target capacity building blocks for peak vs average demand over a typical 3-5 year IT asset write down cycle.
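The difference between the two sizing models above can be sketched in a few lines of Python. This is a deliberately simplified illustration under my own assumptions: the capacity unit (think GB of RAM), the 20% annual growth, the 25% safety margin, the building block sizes and the 256 unit activation step are all hypothetical, not vendor sizing rules.

```python
import math


def appliance_block(initial_demand, annual_growth, years, safety_margin, block_sizes):
    """Pick the smallest fixed building block covering end-of-horizon demand."""
    target = initial_demand * (1 + annual_growth) ** years * (1 + safety_margin)
    for size in sorted(block_sizes):
        if size >= target:
            return size, target
    return None, target  # no single block is large enough


def cuod_activation(initial_demand, annual_growth, years, step):
    """Capacity activated each year, rounded up to whole 'step' increments."""
    plan = []
    for year in range(years + 1):
        demand = initial_demand * (1 + annual_growth) ** year
        plan.append(math.ceil(demand / step) * step)
    return plan


# Hypothetical inputs: 1,000 units today, 20% growth per year, 4 year horizon.
size, target = appliance_block(1000, 0.20, 4, 0.25, [1024, 2048, 4096])
plan = cuod_activation(1000, 0.20, 4, step=256)

print("appliance: buy", size, "up front for a target of", round(target))
print("CUoD: activate per year", plan)
```

In this example the appliance model commits to the largest block on day one, while the on demand model activates capacity in steps as the real workload emerges; neither calculation includes the refresh cycle consideration from the third bullet.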

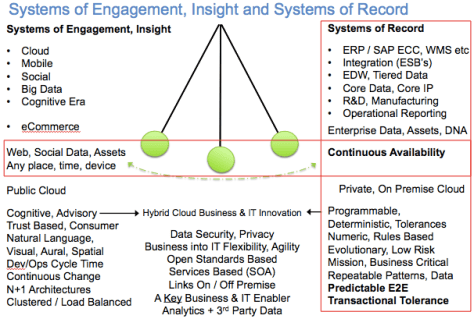

These rules mostly apply irrespective of the SAP Solution Cloud deployment model (Hybrid, Public, Private) selected to match the various development and roll out phases. I’m reminded of a chart I defined back in Feb 2005 to describe this typical Enterprise SAP ERP workload and roll out cycle (just to prove some things don’t really change as much as we might imagine!).

One of my very experienced SAP platform solution architect and sizing colleagues said that he felt sizing SAP HANA appliance based landscapes (vs fully virtualized System p + DB2) was a bit of a “back to the future” experience in SAP / IT platform sizing, server capacity and life cycle / refresh terms.

E.g. there are significant issues and penalties in capacity, disruption and building block upgrade terms if the initial SAP HANA sizing is incorrect, in addition to the typical 24-36 month refresh frequency on commodity / Intel x86 platforms.

This means that selecting the wrong sized SAP HANA appliance typically means rather uncomfortable conversations are required later at the CIO, CTO and/or CFO level when these need to be refreshed, often in advance of typical 4-5+ year Enterprise IT asset write down cycles and System of Record technology refresh terms.

In my view, it’s very important for these technology refresh cycles to be factored into any SAP Platform TCO / TCA analysis. In one prior large Retail scenario we used 3-4 years for Intel / Linux, 4-6 years for POWER / DB2 and 6-8 years for M/frame System z and DB2 with either Intel Linux or Power AIX application server capacity, which aligned to the client’s scenario and two of their 5 year fiscal write down / budgeting processes.

If you end up repeatedly refreshing “commodity” technology, or with a proliferation of different appliance based solutions (with large volumes of cores in the Data Centre to install, manage, power, cool and maintain, with typical DC power to cooling ratios of 1.5-1.7 times), this can quickly become a rather costly and inflexible SAP platform strategy.
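To show why the refresh cycle matters in a TCO / TCA model, here is a minimal Python sketch that counts how many hardware purchases fall inside a planning horizon, and applies the power overhead range quoted above. The 10 year horizon reflects the two 5 year write down periods from the retail example; the specific cycle lengths picked from each range, the 100 kW IT load, and my reading of the 1.5-1.7 figure as a multiplier on IT power are assumptions for illustration.

```python
import math


def purchases_over_horizon(horizon_years, refresh_cycle_years):
    """Initial purchase plus the refreshes needed to cover the whole horizon."""
    return math.ceil(horizon_years / refresh_cycle_years)


def facility_power_kw(it_load_kw, overhead_ratio):
    """Total data centre draw if each IT kW needs 'overhead_ratio' kW overall."""
    return it_load_kw * overhead_ratio


horizon = 10  # two 5 year write down periods
# One cycle length picked from each quoted range (an assumption).
cycles = {"Intel / Linux": 3, "POWER / DB2": 5, "System z": 8}

for platform, cycle in cycles.items():
    print(platform, "purchases over", horizon, "years:",
          purchases_over_horizon(horizon, cycle))

print("facility kW for a 100 kW IT load:",
      facility_power_kw(100, 1.5), "to", facility_power_kw(100, 1.7))
```

Even this crude count shows the shortest cycle buying hardware roughly twice as often over the same horizon, which is exactly the cost that “apples & pears” comparisons tend to leave out.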

Personally I prefer to deploy a proven, scalable, flexible virtual platform upfront and then scale as required through Capacity Upgrade on Demand (CUoD) options. This helps to effectively manage business driven changes in requirements, unexpected mergers / acquisitions etc.

However, if you have an existing workload that is stable, with clear growth rates, and can deploy this over an appropriate appliance building block after a detailed PoC to help with sizing, this can also work. It’s then really all about unexpected workload growth, which is often driven by mergers or later acquisitions, disposals and/or business driven SAP platform consolidation activity.

Indeed, for example even last weekend I was reading about the continued significant rates of mergers, acquisitions and consolidation that is ongoing in the FMCG / Consumer Products Industry.

In these scenarios, suddenly finding your core SAP ERP “System of Record” platform now needs to scale by a factor of 3 or 4 times (vs 1.5-2 times) is actually not that uncommon, as the back office functions for two substantive businesses need to be merged into a single SAP instance / template and platform to realize prior or committed merger / acquisition savings and economies of scale.

It’s for sure a case of buyer beware. The age old golden rule of making sure your target ERP platform has at least x2 capacity headroom has never been more true; if you “tight size it”, it will for sure hurt later. Please refer to the following “SAP HANA – 7 Tips and resources for Cost Optimizing SAP Infrastructure” blog:

https://blogs.saphana.com/2014/11/06/7-tips-and-resources-for-cost-optimizing-sap-hana-infrastructure-2/

For sure Cloud / IaaS based models can help with initial project agility, responsiveness and/or even to help actually size “model” configured environments, but per se it’s still important not to simply assume “a Cloud / Commodity” model is always cheaper than an effectively designed and deployed, virtualised “Private Cloud” or hosted “Private / Hybrid Cloud” model, in particular if you are implementing at scale over a 4-5+ year write down cycle vs 12-36 months.

Disclaimer – This blog represents the author’s own views vs a formal IBM point of view

The views expressed in this blog are the author’s and do not represent a formal IBM point of view.

They do represent an aggregate of many years (20+) of successful ERP / SAP Platform deployment and IT strategy development experience, supplemented with many hours of reading the respective DB2 and/or SAP HANA roadmap materials and presentations at various user conferences and/or user groups, in addition to carefully reading input from a range of respected industry / database analyst sources (these sources are respected and quoted).
